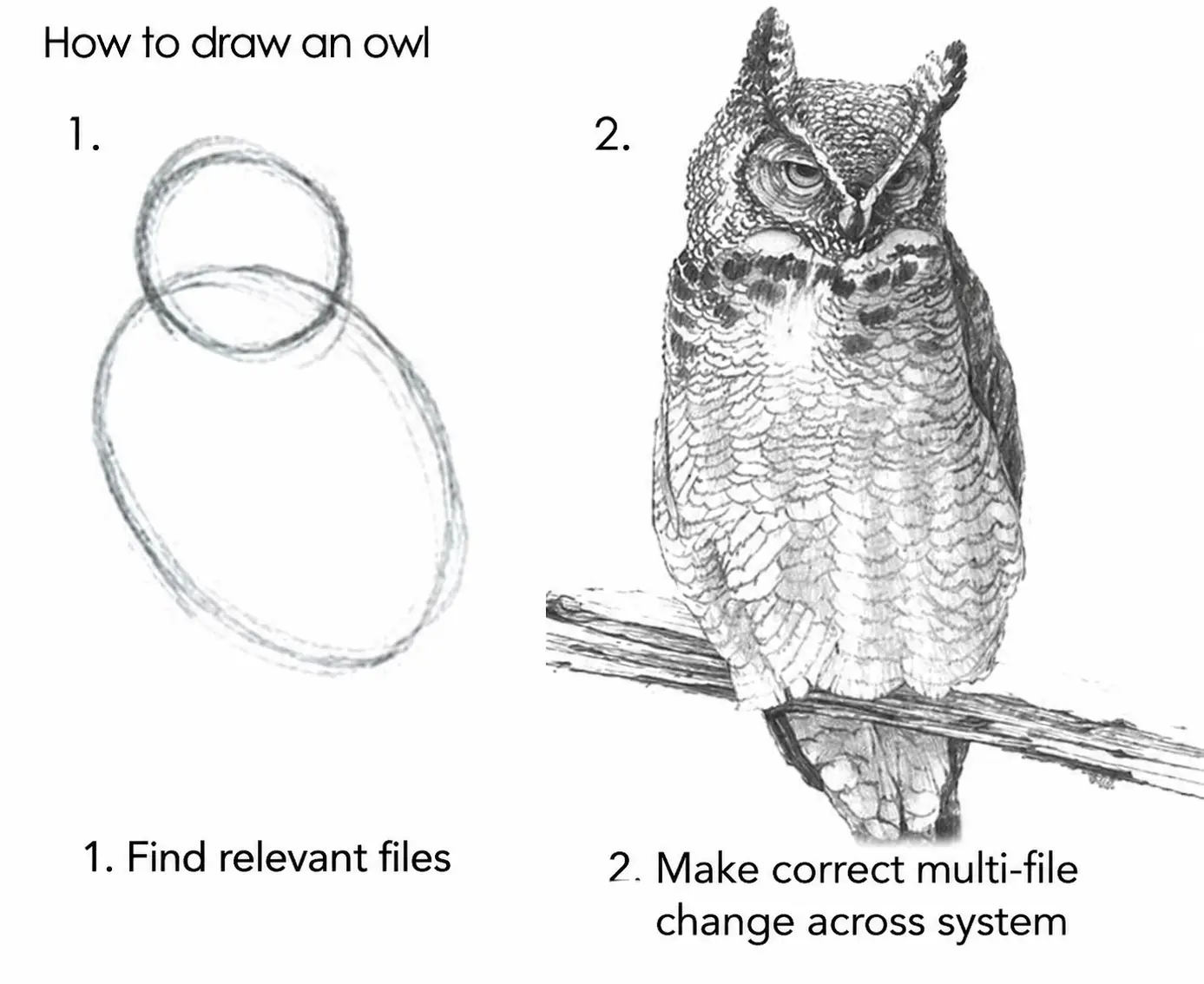

AI Can Find the Code. It Didn't Know How the System Worked.

Why LLMs fail on real codebases

A quick change for a simple feature.

The task was to add a basic UI panel to show a warning, a couple API calls to verify references, and a little data validation.

The hard part? Modifying a large, complex monorepo sitting at the core of our business. Not large like “been working on it for a while”, but large like thousands of contributors going back to the second Bush Administration. Even worse, the sections of code I’d be working on had lain dormant for nearly 10 years. But that wasn’t the problem. The problem was that the solution depended on information that wasn’t locally visible.

The expectation was simple: AI would entirely compress the onboarding and we’d ship something fast. Get in and get out, and let the AI do the work.

I jumped right into creating an implementation plan, resolving classes to specific files, and decomposing the plan into small verifiable steps. The context soon filled up, but running the AI coding agent in a sub-folder unblocked me. Now, instead of fixing things, the agent was using libraries incorrectly, modifying the wrong files, and sometimes inserting nonsensical changes.

The implementation plan hadn’t just gone off the rails, it never found the rails to begin with.

The suggested fixes were in the correct place, but the agent made category errors about how the application worked. Template re-rendering, the dependency injection registry, missing surface area: all were problems.

The agent found the files. But it didn’t know how the system worked.

AI Non-obviousness

The fix wasn’t in the code the agent was looking at. It was somewhere else in the system.

That’s what I mean by non-obviousness: the degree to which the information required to solve a task is not directly specified and must be discovered, selected, and composed from a large search space.

A quick pilot study

To understand LLM coding agent failures, I used Claude Sonnet 4.6 to run a quick pilot. Sonnet 4.6 is close to what my team uses in practice: not the best, but representative of AI agents in the wild.

For the repo, I picked Jenkins. It’s 2 million lines of code and is architecturally similar to the codebase I work on. I found 21 bug-fix commits that modify source files and include at least one test.

For each commit, I ran the following loop:

- Check out the commit before the bug fix

- Add in the new test and confirm it fails

- Prompt the LLM to fix the issue

- Re-run the test and record the result
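Here’s a minimal sketch of that loop in Python. It assumes the git, test-runner, and agent steps are passed in as plain callables; `Task`, `evaluate`, and the four callables are hypothetical names for illustration, not the actual harness.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Task:
    fix_commit: str  # hash of the bug-fix commit
    test_name: str   # test that the bug-fix commit added


def evaluate(
    task: Task,
    checkout: Callable[[str], None],
    add_test: Callable[[Task], None],
    run_test: Callable[[str], bool],
    prompt_agent: Callable[[Task], None],
) -> str:
    """Run one task through the loop; returns 'pass', 'fail', or 'invalid'."""
    # 1. Check out the commit before the bug fix (its first parent).
    checkout(f"{task.fix_commit}~1")
    # 2. Add the new test and confirm it fails without the fix.
    add_test(task)
    if run_test(task.test_name):
        return "invalid"  # the test does not reproduce the bug
    # 3. Prompt the LLM to fix the issue.
    prompt_agent(task)
    # 4. Re-run the test and record the result.
    return "pass" if run_test(task.test_name) else "fail"
```

Passing the steps in as callables keeps the loop itself easy to check with fakes before pointing it at a real repo.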

I used two different prompts: a full description of the commit with specific files, and just the issue description from the bug tracker.

Results: For the full description, Claude was able to fix 61.9% of the bugs, and for just the issue description, 57.1%, with overlapping results for all but one bug.
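For reference, those percentages work out to 13 and 12 passing tasks out of 21. The counts below are back-calculated from the percentages, so treat this as a quick arithmetic check rather than raw data.

```python
TOTAL_TASKS = 21

# Passing counts back-calculated from the reported percentages (an assumption).
passed = {"full description": 13, "issue description only": 12}


def pass_rate(passed_count: int, total: int = TOTAL_TASKS) -> float:
    """Pass rate as a percentage, rounded to one decimal place."""
    return round(100 * passed_count / total, 1)


rates = {prompt: pass_rate(count) for prompt, count in passed.items()}
for prompt, rate in rates.items():
    print(f"{prompt}: {rate}%")
```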

I expected this to be a code search problem, but even when given the exact files, the model failed in the same way. None of the results were hallucinations. The code changes were coherent; they simply lacked understanding of concepts outside the implicated files.

This is what I saw before: system correctness is non-obvious at the location of the code change.

Results Deep Dive

To understand why the agent failed, I dove into a few examples where Claude was unable to fix the issue even when given the exact files needed for the change. Here’s what I found:

PR #23859 is about API token expiration, with the required fix extending the doGenerateNewToken() endpoint signature with a new query param.

The agent stayed entirely within the model/service layer and never crossed into the HTTP handler layer, where the real integration point lived.

This didn’t work.

The non-obvious part is that the feature wasn’t incomplete; it was disconnected at the HTTP handler.

Without the handler change, the UI and tests passed expiration values that were silently ignored, and the new logic was never invoked.
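A toy Python model of this failure shape, not Jenkins code: the service layer already supports expiration, but the handler never forwards the value. `TokenStore`, both handler functions, and the `expiresInDays` parameter name are hypothetical.

```python
from typing import Optional


class TokenStore:
    """Service layer: already knows how to create an expiring token."""

    def generate(self, name: str, expires_in_days: Optional[int] = None) -> dict:
        return {"name": name, "expires_in_days": expires_in_days}


def handle_generate_before(store: TokenStore, query: dict) -> dict:
    # The handler never reads the expiration parameter, so it is dropped here
    # and the service-layer logic for expiration is never exercised.
    return store.generate(query["name"])


def handle_generate_after(store: TokenStore, query: dict) -> dict:
    # Extending the handler's inputs is what connects the feature end to end.
    raw = query.get("expiresInDays")
    expires = int(raw) if raw is not None else None
    return store.generate(query["name"], expires)
```

Nothing in `TokenStore` looks wrong on its own, which is why staying inside that layer never surfaces the bug.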

Next, PR #9810 is an issue about preventing deletion of running builds.

The agent added a guard in the CLI command, checking isLogUpdated() before calling delete().

This is a reasonable fix if you’re thinking in terms of the user and their entry points, but it’s not complete.

The correct fix modifies the lifecycle behavior in Run.delete() itself, which is what makes every code path safe.

The non-obvious part is recognizing that the issue is not with a specific interface, but with a missing invariant at the core of the system.
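The difference between an entry-point guard and a core invariant can be sketched in a few lines of Python. This is a simplified stand-in, not Jenkins code: the `building` flag, the `enforce_invariant` switch, and both caller functions are assumptions made for illustration.

```python
class RunStillBuildingError(Exception):
    """Raised when something tries to delete a build that is still running."""


class Run:
    def __init__(self, building: bool, enforce_invariant: bool) -> None:
        self.building = building
        self.enforce_invariant = enforce_invariant  # toggles the core fix
        self.deleted = False

    def delete(self) -> None:
        # Core invariant: a running build is never deleted, whoever calls.
        if self.enforce_invariant and self.building:
            raise RunStillBuildingError("build is still running")
        self.deleted = True


def cli_delete(run: Run) -> None:
    # Entry-point guard: protects only callers that come through the CLI.
    if run.building:
        raise RunStillBuildingError("build is still running")
    run.delete()


def other_caller_delete(run: Run) -> None:
    # Any other code path (hypothetical here) reaches delete() directly.
    run.delete()
```

With `enforce_invariant=False`, `other_caller_delete` still deletes a running build even though the CLI guard exists; with `enforce_invariant=True`, every path is covered.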

Finally, PR #10494 is a performance update to remove extra checks on the node retention strategies, in updateComputerList.

The agent searched for a place to add a guard to skip the extra work.

That’s the natural approach: the method is doing too much, so restrict it internally.

Unfortunately, that path leads nowhere.

Again, the fix is in the interface: updateComputerList needs a new parameter in its signature that accepts a list of the nodes being updated.

The caller already knows which nodes have updated, while the method does not.

The non-obvious part is understanding state propagation, not just adding a condition where the work happens.
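As a rough Python sketch of that idea (again a toy, not the Jenkins implementation), the method can only skip work if the caller tells it what changed. The function signature, the `changed` parameter, and the `check_retention` callback are hypothetical.

```python
from typing import Callable, Iterable, List, Optional


def update_computer_list(
    nodes: List[str],
    check_retention: Callable[[str], None],
    changed: Optional[Iterable[str]] = None,
) -> int:
    """Run retention checks and return how many nodes were checked."""
    if changed is None:
        # No information from the caller: every node has to be checked.
        targets = nodes
    else:
        # The caller knows which nodes changed, so only those are checked.
        changed_set = set(changed)
        targets = [node for node in nodes if node in changed_set]
    for node in targets:
        check_retention(node)
    return len(targets)
```

No condition added inside the loop could recover `changed`; that knowledge exists only at the call site, so it has to travel through the signature.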

In each case, the agent made a reasonable local change, but none of the changes reflected enough understanding of system behavior to solve the problem.

What about a better model?

I reran the “issue only” prompt with the latest model, Claude Opus 4.7, and recorded the results here.

The better model marginally improved the success rate, but the same type of failures remained. Opus 4.7 flipped a single example, so 13 passed instead of 12. The same eight tasks failed for both models, including all the examples above. We see a similar set of mistakes: changes in the wrong place, missing boundaries, incomplete integration. Frontier models are a little better at solving problems, but the same tasks failed for the same reasons.

This isn’t a random result, and multi-shot is unlikely to help. The issue is system understanding. Better models slightly improve the outcome, but don’t remove the failure.

Conclusion

When I used AI to add a feature to a large enterprise repo, I failed to meet the expectation that AI would make product fixes quick and easy. I applied an even better model to 21 Jenkins issues, and roughly 40% of the time (8 of 21) it failed for the same reasons. Frontier models are only slightly better. The agent could find the right files. It could write reasonable code. It just didn’t know where correctness lived in the system.

Programming is making a mental model real. If the model is wrong, the code can’t be right. Sometimes we can read the source code and intuit the model clearly. This is where AI can speed up our work. For another class of changes, truth emerges from running the system, knowing how an undocumented library actually works, or asking another engineer on the team. It’s not obvious to either man or machine.

These failures don’t look like something that disappears with better models, more reasoning steps, or better code search. The problem is knowing how the system works, and that requires a distillation of previous changes, experience with the system, and ultimately information outside the individual files being edited.

To make AI work on these codebases, the system itself has to be legible. Architectural decision records, living docs, and onboarding guides would help solve this problem. Otherwise, we’re asking the model to do something impossible: onboard instantly, discover what isn’t written down, and guess how a local change affects global behavior. That’s something even the best engineers can’t do.

Resources

All the experimental code is available on GitHub.