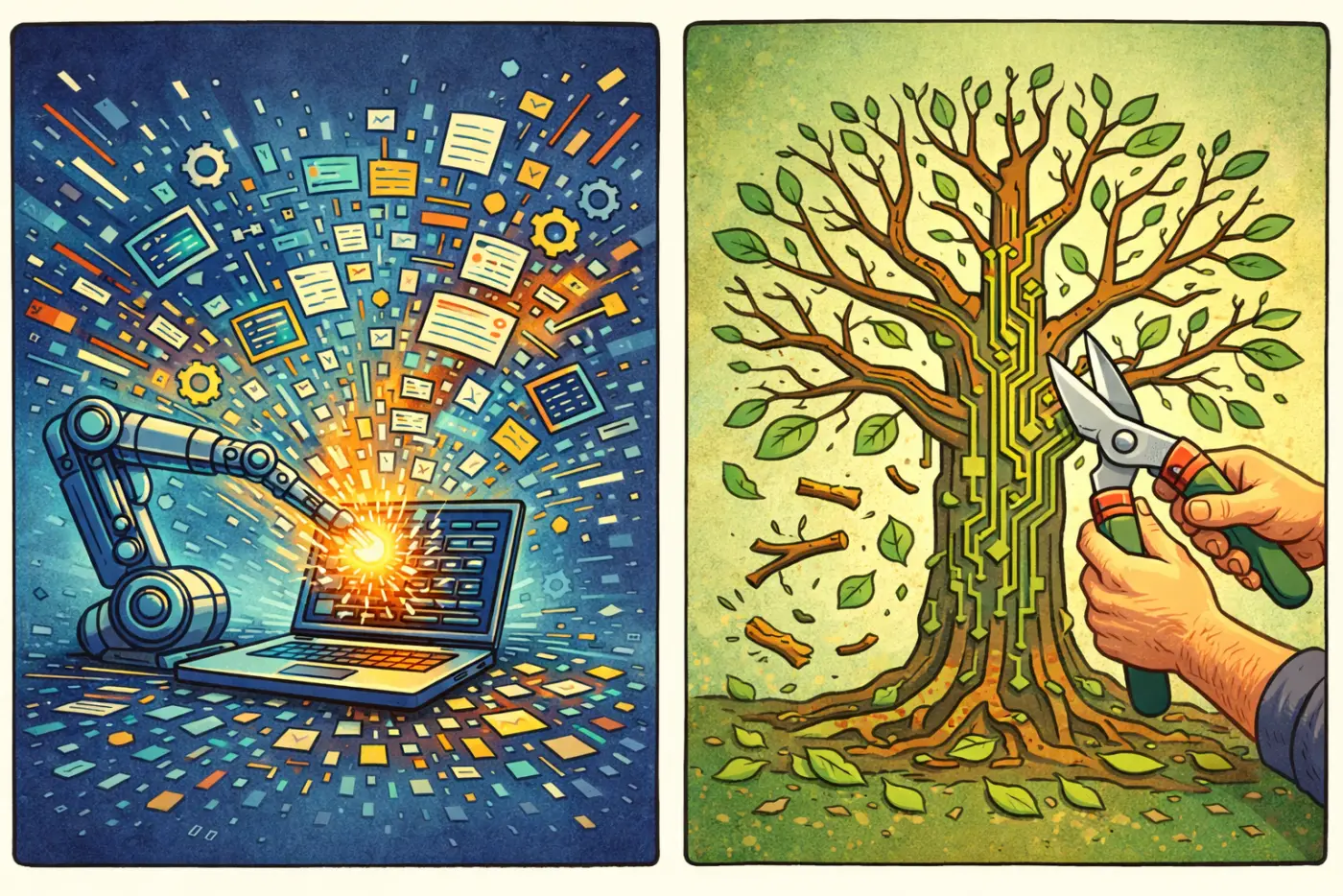

AI is an Expansion Engine. Software Engineering Needs a Pruning Engine

Why LLM Coding Fails Without Selective Pressure

I tried to use AI to build a simple app.

The task wasn’t unusual: a data ingestion flow in a legacy system. A variety of data shapes, a series of transformations, validation logic, and a few API and LLM calls. The kind of project I’ve built, tested, and deployed many times over the last decade.

At first, using AI felt like a jet pack.

Then something strange happened:

- Fixes broke previously working features

- Prompts that worked earlier stopped working

- Unrelated parts of the system started changing

- The codebase grew and became harder to reason about

The more I used the model, the worse the development process became.

It didn’t feel sharp and precise like coding should. It felt like losing control.

Eventually, I realized what was happening:

AI optimizes for expansion. But software development requires convergence.

The loop

I had fallen into a loop:

Prompt → Expand → Patch → Break → Repeat

Each incremental step felt like the next right thing. The system kept growing. But it was not getting simpler, clearer, or more correct.

Why AI fails here

AI makes it cheap to add:

- modules

- interfaces

- abstractions

- edge cases

- new use cases

- fancy types

But it does not make it easy to:

- delete code

- compress abstractions

- reduce scope

- prioritize tradeoffs

- prove something is unnecessary
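Part of the pruning side can be mechanized. As a minimal sketch, assuming a single Python file and only static references, the function below lists top-level functions that nothing in the file refers to. The names `unused_functions` and `SAMPLE` are hypothetical, and the result is a list of candidates for deletion, not proof: dynamic lookups, imports from other files, and framework hooks are invisible to it.

```python
import ast


def unused_functions(source: str) -> list[str]:
    """Return top-level function names that are never referenced in `source`."""
    tree = ast.parse(source)

    # Names introduced by top-level `def` / `async def` statements.
    defined = [
        node.name
        for node in tree.body
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    ]

    # Every identifier that is read, called, or accessed as an attribute.
    referenced = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            referenced.add(node.id)
        elif isinstance(node, ast.Attribute):
            referenced.add(node.attr)

    return [name for name in defined if name not in referenced]


# Hypothetical module with one function that nothing calls.
SAMPLE = '''
def parse(row):
    return row.strip()

def legacy_parse(row):
    return row

def main(rows):
    return [parse(r) for r in rows]

main(["a "])
'''

print(unused_functions(SAMPLE))  # ['legacy_parse']
```

A report like this does not delete anything on its own. It only turns "is this still needed?" into a question that has to be answered.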

This matters because software is more than a pile of locally reasonable changes. It is a globally constrained system built for its effects.

LLMs are very good at producing local coherence.

Software requires global constraint.

Each individual addition can look reasonable. But if your process is only additive, it will never converge.

The missing step

The bottleneck isn’t generating code. It’s stopping the model from endlessly generating more.

Developing software requires the discipline to say no: to prune extra features, reject useless abstractions, and remove code that hasn’t earned its place.

Without negative pressure, coding with AI feels like progress while the system quietly drifts toward bloat.

What actually worked

AI coding improved when I forced constraints into the process:

- Write a spec first, then treat it as law

- Lock in the architecture before coding

- Break work into atomic, verifiable steps

- Force the model to restate its understanding

- Track decisions in an append-only log

- Add use-case-linked tests before implementation

In short: constrain the system until it cannot expand arbitrarily.
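One way to picture "atomic, verifiable steps" is a plan where every step carries its own check and nothing later runs until the earlier check passes. The sketch below is an illustration under assumptions, not the process used in this article: `Step`, `run_plan`, and the use-case IDs are hypothetical names, and the lambdas stand in for real tests.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    name: str                    # short label for the step
    use_case: str                # ID of the use case this step serves
    verify: Callable[[], bool]   # check that must pass before moving on


def run_plan(steps: list[Step]) -> list[str]:
    """Verify steps in order and stop at the first failure.

    Returns the names of the steps that passed.
    """
    passed = []
    for step in steps:
        if not step.verify():
            break
        passed.append(step.name)
    return passed


# Hypothetical plan: the second check fails, so the third never runs.
plan = [
    Step("parse-input", "UC-1", lambda: int("42") == 42),
    Step("validate-range", "UC-2", lambda: False),
    Step("export-report", "UC-3", lambda: True),
]

print(run_plan(plan))  # ['parse-input']
```

Stopping at the first failure is the point of the design: the model cannot pile new work on top of a step that has not been shown to work.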

A concrete example

To test this approach, I built a small project, available on GitHub along with a working demo.

The app is a financial and retirement simulator built to answer a simple question:

For a given retirement strategy, what does uncertainty in market returns actually mean?
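To make the question concrete, here is a minimal Monte Carlo sketch. It is not the app's implementation. It assumes a fixed yearly withdrawal taken at the start of each year and independent, normally distributed annual returns. The function names and every default (5% mean return, 12% standard deviation, 1,000 trials) are placeholders chosen only for illustration.

```python
import random
import statistics


def simulate_final_balances(start_balance, annual_withdrawal, years,
                            mean_return=0.05, return_stdev=0.12,
                            trials=1000, seed=0):
    """Simulate `trials` retirement paths and return each final balance."""
    rng = random.Random(seed)  # seeded so repeated runs give the same result
    finals = []
    for _ in range(trials):
        balance = float(start_balance)
        for _ in range(years):
            balance -= annual_withdrawal
            if balance <= 0:
                balance = 0.0  # the money ran out on this path
                break
            # Assumed return model: one normal draw per year, floored at -100%.
            yearly_return = max(-1.0, rng.gauss(mean_return, return_stdev))
            balance *= 1 + yearly_return
        finals.append(balance)
    return finals


def summarize(finals):
    """Report the spread of outcomes rather than a single expected value."""
    ordered = sorted(finals)
    n = len(ordered)
    return {
        "p10": ordered[int(0.10 * (n - 1))],
        "median": statistics.median(ordered),
        "p90": ordered[int(0.90 * (n - 1))],
        "ruin_rate": sum(1 for b in ordered if b == 0.0) / n,
    }


finals = simulate_final_balances(1_000_000, 40_000, 30)
print(summarize(finals))
```

The gap between the 10th and 90th percentiles, together with the share of paths that hit zero, is one way to express what uncertainty in returns means for a given strategy.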

To develop the app, I used a document-driven process to codify decisions into immutable artifacts:

- Write a spec.md that defines user behavior and system constraints.

- Create an architecture.md to formalize the code structure.

- Have the model produce a system-understanding-summary.md to prove it understands the spec.

- Build an implementation-plan.md with small steps, each tied to a verification test and scoped to be a “reasonable ask” for a junior engineer.

- Keep an append-only decision-log.md and progress-log.md so context lives in files, not in the prompt history.

- Prompt the agent to complete the spec section by section, following the coding rules in AGENT.md.
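"Append-only" is easy to state and easy for an agent to violate by rewriting a file. A minimal sketch of how it could be enforced, assuming the logs are plain UTF-8 text files: one helper that only ever opens the log in append mode, and one check that a new version of the file still begins with the old version. Both function names and the entry format are hypothetical, not taken from the project.

```python
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def append_entry(log_path: Path, text: str) -> None:
    """Add one timestamped entry; the file is never opened in a truncating mode."""
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    with log_path.open("a", encoding="utf-8") as f:
        f.write(f"- {stamp} {text}\n")


def is_append_only(previous: str, current: str) -> bool:
    """True if `current` is `previous` with zero or more characters added at the end."""
    return current.startswith(previous)


# Usage: take a snapshot, append, then confirm the history is intact.
with tempfile.TemporaryDirectory() as tmp:
    log = Path(tmp) / "decision-log.md"
    append_entry(log, "Use a fixed random seed in all simulations")
    before = log.read_text(encoding="utf-8")
    append_entry(log, "Reject the plugin system as out of scope")
    after = log.read_text(encoding="utf-8")
    print(is_append_only(before, after))  # True
```

The same prefix check could run in a pre-commit hook or CI step against the previously committed version, so an edited or shortened log fails loudly instead of slipping through.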

The important part was not “using better AI.” It was introducing a structure that puts selective pressure on generative output.

Once I stopped treating the model like an autonomous builder and started treating it more like a junior engineer, the process became much more reliable.

Conclusion

AI is an expansion engine. Software engineering is a pruning process.

Traditional development was constrained by time, effort, performance, and real user needs. These constraints acted as natural filters. Not every idea made it into the system.

LLMs remove these filters. New code is cheap. Ideas are easy to try. Tokens are monetized. Expansion is all but inevitable.

Left unconstrained, this doesn’t produce better systems—it produces drift.

If we want to ship production software, we now have to impose constraints ourselves. We have to create the selective pressure that used to come for free and is now too easy to ignore.

In the end, good software isn’t what you choose to build. It’s what you choose not to keep.