Posted on May 6, 2020

Motivation

Is it possible to compose parsers and state monad transformers with different input and output types? where a parser/state monad is the form:

data M m i o a = M { uM :: i -> m (o, a) }

such that:

given M m A B () and M m B C ()

create M m A C ()

Yes! with Indexed Monads!

If we look at indexed monads, especially indexed state, we notice it’s isomorphic to the state monad, when i eq o. This is especially useful when we want to compose a transfromation, A -> Z through many smaller function applications!

Starting with our trusty old parser, let’s derive an indexed monad in broad strokes, with input/output types, where we can also run IO effects, if need be.

newtype P a = P { unP :: String -> Maybe (a, String) }

newtype P' m a s = P' { unP' :: s -> m (a, s) }

newtype P' m a i o = P' { unP' :: i -> m (a, o) }

Further, since m has kind * -> *, our type P', could be considered a monad transformer once we are able to define a suitable lift function.

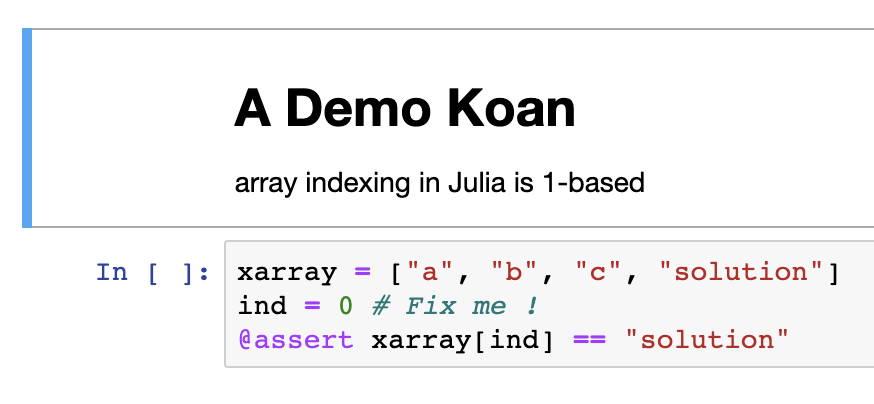

An illustrated example

To demonstrate indexed monads, I’m going to work through an example of how indexed monads can be used to compose a series of transforms through subsequent datatypes, mocking a compiler pipeline with a monad called IxMonadT, which is isomorphic to P' defined above. Full source code available here.

IxMonadT Definition

newtype IxMonadT i o m a = IxMonadT { runIx :: i -> m (a, o) }

Take note that our outer term, and the polymorphic target of our function instance, is going to be a. This could just as well be o ! We also do a slight re-arrangement, putting m after the index types, i and o. The reason for this, is so we can write a MonadTrans instance and be a transformer, although as we will see, we cannot write instance methods for the Haskell Monad typeclass.

Making IxMonadT a Monad

To make our data type a monad, we need to define a return, and a bind. If we try to add an instance of Monad from Control.Monad for IxMonadT, the polymorphic variable will be our a from the newtype definition, and we will have to define the following instance:

(CM.>>=) :: IxMonadT i o m a -> (a -> IxMonadT i o m b) -> IxMonadT i o m b

This is clearly not what we want!

(Note: we are going to use CM to represent qualified imports from Control.Monad…)

Instead, we will write the bind as follows:

(>>=) :: (CM.Monad m) => IxMonadT m i c a -> (a -> IxMonadT m c o b) -> IxMonadT m i o b

(>>=) v f = IxMonadT $ \i -> runIx v i CM.>>= \(a', o') -> runIx (f a') o'

This works to compose an indexed monad from i -> m (a, c) and one of c -> m (b, o) into one of i -> m (b, o) ! However, given the function signature of bind, there is no way to shoe horn this into the Monad typeclass instance, which requires a signature, m a -> (a -> m b) -> m b, so our indexed monad will never satisfy a monad constraint, and we’ll have to be satisfied knowing it’s an enriched category in the monoidal category of endofunctor :)

RebindableSyntax

Given that we cannot use bind, or >>=, as defined in base to work on our IxMonadT, it is still possible to use do blocks via the language pragma, RebindableSyntax. This enables NoImplicitPrelude as well, and ultimately means that do blocks use the bind and return functions are available in locally in scope. Thus, we need to bring in >>= from base as a qualified import, to prevent an ambiguous occurrence error when trying to resolve which >>= to use inside each do block.

Defining the rest of IxMonad Functions

Here are the rest of our IxMonadT functions, credit to Stephen Diehl’s WIWIKWLH article on the same subject. We are still able to get a MonadTrans and Functor instance, although MonadState and MonadIO are not available due to our unique bind signature.

-- traditional monad functions

return :: (CM.Monad m) => a -> IxMonadT s s m a

return a = IxMonadT $ \s -> CM.return (a, s)

(>>=) :: (CM.Monad m) => IxMonadT i c m a -> (a -> IxMonadT c o m b) -> IxMonadT i o m b

(>>=) v f = IxMonadT $ \i -> runIx v i CM.>>= \(a', o') -> runIx (f a') o'

(>>) :: (CM.Monad m) => IxMonadT i c m a -> IxMonadT c o m b -> IxMonadT i o m b

v >> w = v >>= \_ -> w

instance MonadTrans (IxMonadT s s) where

lift :: (CM.Monad m) => m a -> IxMonadT s s m a

lift ma = IxMonadT $ \s -> ma CM.>>= (\a -> CM.return (a, s))

-- MonadIO

liftIO :: CM.MonadIO m => IO a -> IxMonadT s s m a

liftIO = lift . CM.liftIO

-- MonadState

put :: (CM.Monad m) => o -> IxMonadT i o m ()

put o = IxMonadT $ \_ -> CM.return ((), o)

modify :: (CM.Monad m) => (i -> o) -> IxMonadT i o m ()

modify f = IxMonadT $ \i -> CM.return ((), f i)

get :: CM.Monad m => IxMonadT s s m s

get = IxMonadT $ \x -> CM.return (x, x)

gets :: CM.Monad m => (a -> o) -> IxMonadT a o m a

gets f = IxMonadT $ \s -> CM.return (s, f s)

-- eval/exec the transformer

evalIxMonadT :: (CM.Functor m) => IxMonadT i o m a -> i -> m a

evalIxMonadT st i = fst <$> runIx st i

execIxMonadT :: (CM.Functor m) => IxMonadT i o m a -> i -> m o

execIxMonadT st i = snd <$> runIx st i

instance (CM.Monad m) => CM.Functor (IxMonadT i o m) where

fmap :: (CM.Monad m) => (a -> b) -> IxMonadT i o m a -> IxMonadT i o m b

fmap f v = IxMonadT $ \i ->

runIx v i CM.>>= \(a', o') -> CM.return (f a', o')

Thus, we have an indexed monad transformer that can compose state monads!

Pipeline Components

Back to our example, for our example computation of many small steps, let’s say we want to start with SourceCode, and produce a Core. We have the following types, as part of a compilation pipeline for the programming language Arbitrary.

newtype SourceCode = SourceCode Text

newtype Tokenized = Tokenized [Text]

data Expr = EInt Int | EStr Text | EVar Text | EApp Expr Expr deriving (Show)

newtype Syntax = Syntax { unSyntax :: Expr } deriving (Show)

newtype Core = Core { unCore :: Expr } deriving (Show)

Here we can see the transformation functions: For the sake of this demonstration, I just used coerce, or stubbed out a const function.

source2Toke :: SourceCode -> Tokenized

source2Toke (SourceCode txt) = Tokenized [txt]

toke2Syntax :: Tokenized -> Syntax

toke2Syntax _ = Syntax $ EApp (EVar "Fn") $ EInt . fromIntegral $ 42

syntax2Core :: Syntax -> Core

syntax2Core = coerce

Putting it all together!

For our demonstration, we will take our SourceCode data type, and generate some Core, using the previously defined transformation. Additionally, we’ll run and lift an IO action to show the results of running the pipeline.

run :: IxMonadT SourceCode Core IO Core

run = do

toke <- gets source2Toke -- :: IxMonadT SourceCode Tokenized IO ()

liftIO $ putStrLn "inside IxMonad" -- :: IxMonadT Tokenized Tokenized IO ()

syn <- gets toke2Syntax -- :: IxMonadT Tokenized Syntax IO ()

modify syntax2Core -- :: IxMonadT Syntax Core IO ()

result <- get -- :: IxMonadT Syntax Core IO Core

-- with get we can manipulate the value of the transformation

liftIO $ print result -- :: IxMonadT Syntax Core IO Core

return result -- :: IxMonadT SourceCode Core IO Core -- (final type)

main :: IO ()

main = do

let srcCode = SourceCode "here is my source code"

in execIxMonadT run srcCode CM.>> print "done"

Great! The main property we want here is that our IxMonadT function run goes from SourceCode to Core, and we are able to do through indexed monad composition! We also get interleaved IO effects, and the convenience of do notation!

Where can we go from here?

The inspiration for this idea was to refactor ghc’s Stream.hs module in a type safe way, such that refactoring other parts of the compiler will not cause a major issue. The source code for Stream.hs is available here. However, the way stream is written, our indexed monad type is not a drop in replacement. (Side note: Steam.hs, as written is not a true steam like Condiut or Pipes).

Inspiration

As for proper uses of indexed monads, there are a few that I think are worth a mention:

- Squeal uses indexed session monads for tracking schema migrations. (Shout out to Eitan for help with this article!).

- Session Types Which uses a phantom parameter as an index to “protect” resources like file handles or other resources.

- JSONTest is an indexed monad approach to testing, where your index type variables represent an abstract datatype to encode into json, the result of extracting a value, and what that value should be. Note, this is the only library does use rebindable syntax.

- Ian Malakhovski’s thesis, p. 108-109 An example of an indexed monad with error handling defined via

throw and catch, which is a pretty interesting idea for the application developed in the beginning of the article, considering the propensity for compilation to fail!

- Control.Monad.Indexed Kmett’s indexed monad library, a good reference for finding definitions and function signatures.

Conclusion

In this article, we’ve defined an indexed monad transformer, and employed it to run a mock compiler transformation pipeline with interleaved IO effects. Although we can write all the needed methods for Monad, MonadState, and MonadIO, our definition of >>= prevents us from being an instance of these classes. This is unfortunate, as we can use our IxMonadT just like a monad, and it’s bind has a basis in category theory to give use lawfulness. The other consequence is that if your application is using “tagless final” or “ReaderT” patterns, you will not be able to provide a integrate IxMonadT into your monad transformer, since we cannot define constrains like MonadReader.

On using RebindableSyntax, I’m not sure I would advocate using this extension outside of library code, and the ambiguity that is caused when trying to interleave a locally defined >>=, say the one for IxMonadT, and the one from your favorite prelude can be frustrating. That said, there are libraries mentioned in my inspiration that fall on both sides of the issue, JSONTest uses the pragma well, while Squeal does not, and Eitan defines a typeclass IndexedMonadTrans with a bind function, pqBind.

Due to these restrictions, I believe the best way to derive benefit out of indexed monads is to use them in an encapsulated manner to model a specific problem. This gives the library author the benefit of indexed type variables for additional type safety and composition, and avoids exposing the end library the clunky ergonomics of RebindableSyntax.

]]>